Extend from Frame: Chaining Clips Beyond 15 Seconds

> Grok Imagine caps a single generation at 15 seconds. Extend from Frame, shipped March 2026, lets you take the last frame of one clip and feed it as the start frame of the next. Here is how it works, what drifts, and what it costs.

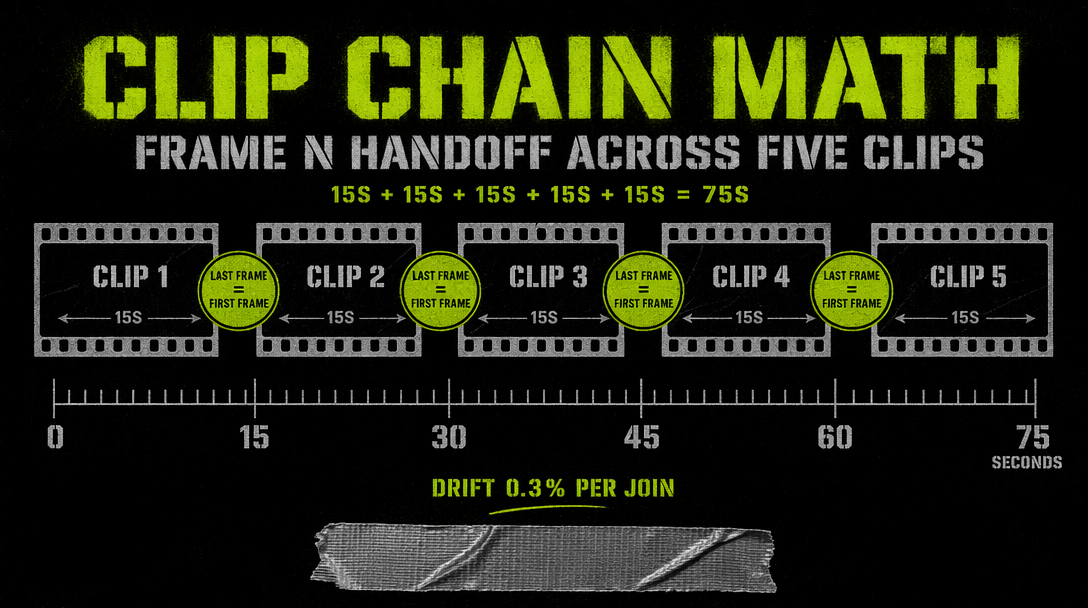

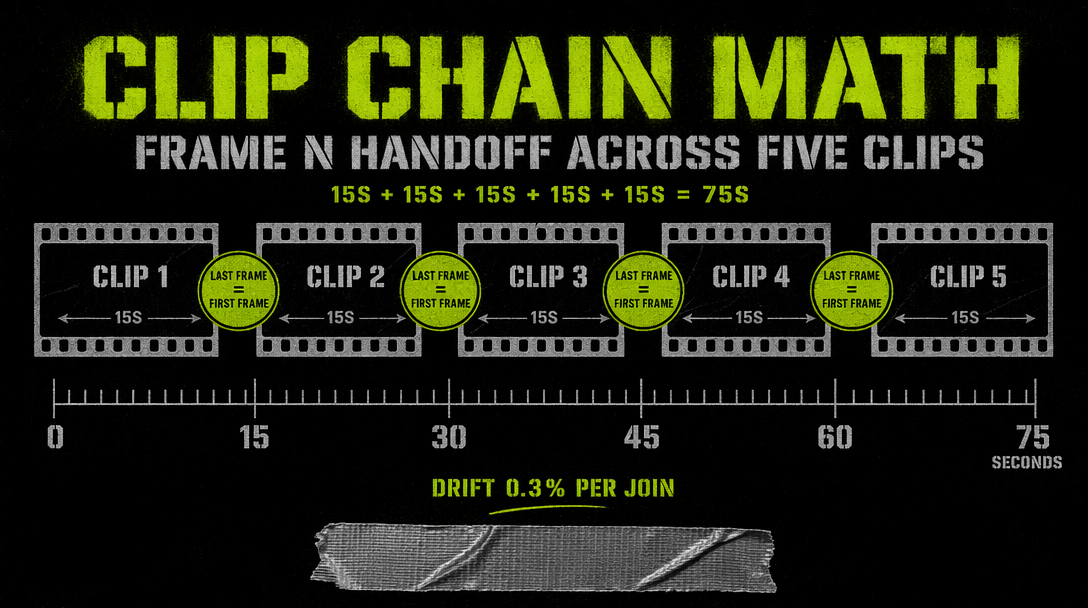

The 15-second cap on a single Grok Imagine call is a hard limit. When xAI shipped Extend from Frame in March 2026, that cap did not move. What changed is that you can chain generations by handing the last frame of clip A to the model as the first frame of clip B. The result is a video that runs to whatever length you pay for, with a reasonably consistent subject across the seams.

This post covers how the feature works, how to manage drift, and when chaining past 15 seconds is worth the cost.

How the mechanic works

The base generation returns a video URL. Extend from Frame takes the last frame as a conditioning image and generates the next clip starting from it, matching color, lighting, and camera position. The endpoint on fal is xai/grok-imagine-video/image-to-video, which accepts an image URL as the starting frame.

The extract step is yours. You pull the last frame from the returned video using ffmpeg, then upload that frame to fal storage and feed the URL to the next call.

The chain is not magic. The first frame conditions the new clip but does not carry audio state, scene memory, or subject identity across shots. You still need to describe what should happen in the next clip.
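Because every call is still capped at 15 seconds, a longer target has to be split into segments before you write prompts. A minimal sketch of that split, assuming whole-second durations (the values used in this post are all integers); `planSegments` is a hypothetical helper, not part of any SDK:

```typescript
// Hypothetical helper: split a target length into clip durations that
// each fit under the per-call cap, keeping segments as even as possible.
function planSegments(totalSeconds: number, cap: number = 15): number[] {
  if (!Number.isInteger(totalSeconds) || totalSeconds <= 0) {
    throw new RangeError("totalSeconds must be a positive integer");
  }
  const count = Math.ceil(totalSeconds / cap);   // fewest clips that fit
  const base = Math.floor(totalSeconds / count); // even share per clip
  const extra = totalSeconds % count;            // leftover seconds to spread
  return Array.from({ length: count }, (_, i) => base + (i < extra ? 1 : 0));
}

console.log(planSegments(50)); // [13, 13, 12, 12]
```

Even segments are a design choice, not a requirement. You may prefer to cut where the action changes, as long as no segment exceeds the cap.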

Running a two-clip chain

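```ts
import { fal } from "@fal-ai/client";
import { execFileSync } from "node:child_process";
import { readFileSync } from "node:fs";

fal.config({ credentials: process.env.FAL_KEY });

// Clip A: a plain text-to-video generation.
const clipA = await fal.subscribe("xai/grok-imagine-video/text-to-video", {
  input: {
    prompt: "A kid on a skateboard rolls down a sunlit alley past graffiti walls, tracking shot from behind",
    resolution: "720p",
    duration: 8,
    audio: true
  }
});

// Download clip A. Passing the URL as an argument avoids shell quoting problems.
execFileSync("curl", ["-s", "-o", "clipA.mp4", clipA.data.video.url]);
// Grab one frame from the final 0.1 seconds of the clip.
execFileSync("ffmpeg", ["-y", "-sseof", "-0.1", "-i", "clipA.mp4", "-vframes", "1", "-q:v", "2", "last.jpg"]);

// Upload the frame to fal storage. The client's upload() takes a Blob or File, not a path.
const frame = new Blob([readFileSync("last.jpg")], { type: "image/jpeg" });
const upload = await fal.storage.upload(frame);

// Clip B: image-to-video, starting from the last frame of clip A.
const clipB = await fal.subscribe("xai/grok-imagine-video/image-to-video", {
  input: {
    image_url: upload,
    prompt: "The skateboarder reaches a wide plaza, kicks the board up, catches it, looks back over his shoulder toward the camera",
    resolution: "720p",
    duration: 7,
    audio: true
  }
});

console.log(clipB.data.video.url);
```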

Together the two clips give you 15 seconds of continuous action (8 + 7). Download clip B the same way as clip A, then join the two files with ffmpeg's concat demuxer to get a single video.
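A sketch of the concatenation step, assuming both clips are already on disk as `clipA.mp4` and `clipB.mp4` and share the same codec and resolution, so the streams can be copied without re-encoding. The helper names and the `clips.txt` list file are illustrative:

```typescript
import { execFileSync } from "node:child_process";
import { writeFileSync } from "node:fs";

// Build the list file that ffmpeg's concat demuxer reads: one
// "file '<path>'" line per clip, with single quotes in paths escaped.
function concatList(files: string[]): string {
  return files.map((f) => `file '${f.replace(/'/g, "'\\''")}'`).join("\n") + "\n";
}

// Write the list and join the clips with stream copy (no re-encode).
// Assumes ffmpeg is on PATH and the clips have matching stream parameters.
function concatClips(files: string[], output: string): void {
  writeFileSync("clips.txt", concatList(files));
  execFileSync("ffmpeg", [
    "-y", "-f", "concat", "-safe", "0",
    "-i", "clips.txt", "-c", "copy", output,
  ]);
}

// Usage: concatClips(["clipA.mp4", "clipB.mp4"], "chain.mp4");
```

If the clips ever differ in resolution or codec settings, stream copy will not produce a clean file and you would need to re-encode instead.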

What drifts across chains

Subject identity is the main drift axis. The first frame carries appearance, but across three or four chains the model slowly reinterprets features. By clip five or six you are looking at a cousin of the original subject.

Lighting drifts next. If your prompt describes a different time of day, the model follows the prompt and breaks the continuity. Keep lighting consistent across prompts.
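One way to keep lighting and subject wording identical from clip to clip is to write them once and append them to every action prompt. This is a sketch of that habit, not a documented feature: `Continuity` and `buildPrompt` are hypothetical names, and how much a repeated descriptor reduces drift is an assumption you should test on your own footage.

```typescript
// Shared descriptors that should not change between clips in a chain.
interface Continuity {
  subject: string;
  lighting: string;
}

// Combine a per-clip action with the fixed descriptors so every prompt
// in the chain repeats the same subject and lighting wording.
function buildPrompt(action: string, c: Continuity): string {
  const trimmed = action.trim().replace(/\.+$/, ""); // drop trailing periods
  return `${trimmed}. Subject: ${c.subject}. Lighting: ${c.lighting}.`;
}

const look: Continuity = {
  subject: "a kid in a red hoodie on a skateboard",
  lighting: "late-afternoon sun, long shadows",
};

console.log(buildPrompt("The skateboarder reaches a wide plaza.", look));
```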

Ambient audio restarts each clip. For dialogue-heavy chains this is fine. For atmospheric scenes you may want to mute the model's audio and layer your own track in post.
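For the layer-your-own-track route, a sketch of the ffmpeg arguments that keep the video stream of the joined chain and swap in an external audio file. The file names are placeholders and `replaceAudioArgs` is a hypothetical helper; the flags themselves are standard ffmpeg options.

```typescript
// Build ffmpeg arguments that take video from the first input and audio
// from the second, copy the video stream untouched, and stop at the
// shorter of the two inputs.
function replaceAudioArgs(video: string, track: string, output: string): string[] {
  return [
    "-y",
    "-i", video,
    "-i", track,
    "-map", "0:v:0", // video from the generated chain
    "-map", "1:a:0", // audio from your own track
    "-c:v", "copy",
    "-shortest",
    output,
  ];
}

console.log(["ffmpeg", ...replaceAudioArgs("chain.mp4", "ambience.mp3", "final.mp4")].join(" "));
```

Pass the array to `execFileSync("ffmpeg", args)` from `node:child_process` to run it.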

Background detail stays stable for the first two or three clips and then starts to morph. Small signage will change words by clip four.

Cost math

Each chained clip is priced like a normal image-to-video call. For 720p that is $0.07 per second plus $0.002 per input frame. A 10-second 720p clip runs $0.702, or roughly $0.70. Chain five 10-second clips and you pay about $3.51 for 50 seconds of video.
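The arithmetic is easy to script for other clip lengths. This sketch uses the 720p rates quoted above and assumes one input frame per call and that every clip in the chain, including the first, is billed at the image-to-video rate, which is what the $3.51 figure implies:

```typescript
// 720p rates quoted in this post.
const PER_SECOND_720P = 0.07;
const PER_INPUT_FRAME = 0.002;

// Cost of one chained clip: per-second rate plus one input frame.
function clipCost(seconds: number): number {
  return seconds * PER_SECOND_720P + PER_INPUT_FRAME;
}

// Total for a chain, rounded to a tenth of a cent to hide float noise.
function chainCost(durations: number[]): number {
  const total = durations.reduce((sum, s) => sum + clipCost(s), 0);
  return Math.round(total * 1000) / 1000;
}

console.log(chainCost([10, 10, 10, 10, 10]).toFixed(2)); // "3.51"
```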

The edit-video endpoint can polish the final output, but it auto-downscales to 854x480 and truncates to 8 seconds, so keep the chain in native 720p.

When chaining is worth it

Chaining works well for single-subject stories where the action flows forward, like a character walking through a series of environments. It also works for slow-tempo product reveals.

Chaining is not the right tool for multi-character scenes. By clip three both characters will look slightly different, which is jarring. For ensemble shots, cut to new setups.

Most short-form social posts land at 10 seconds or under, so a single generation covers them. Chaining earns its cost for narrative pieces and commercial work where the shot has to breathe.

The 15-second cap is still there, but Extend from Frame turns it into a seam rather than a wall.